Warrior AI — Platform Architecture

5-Layer Platform.

Designed to Scale.

A Firebase-authenticated gateway routes Warrior coaching sessions through 7 specialist Dify agents, backed by a Redis-cached Firebase Bridge and Qdrant vector store. Built for 50 users today, engineered for 10,000 at $3,444/mo.

Hono Gateway · 7 Dify Agents · Firebase Auth · Redis Cache · Cloudflare Tunnel

- 5 Architecture Layers
- 7 Specialist AI Agents
- 10,000 Target Concurrent Users
- 3–17s Request Lifetime
- 80%+ Redis Cache Target
- $3,444 Full Production / mo

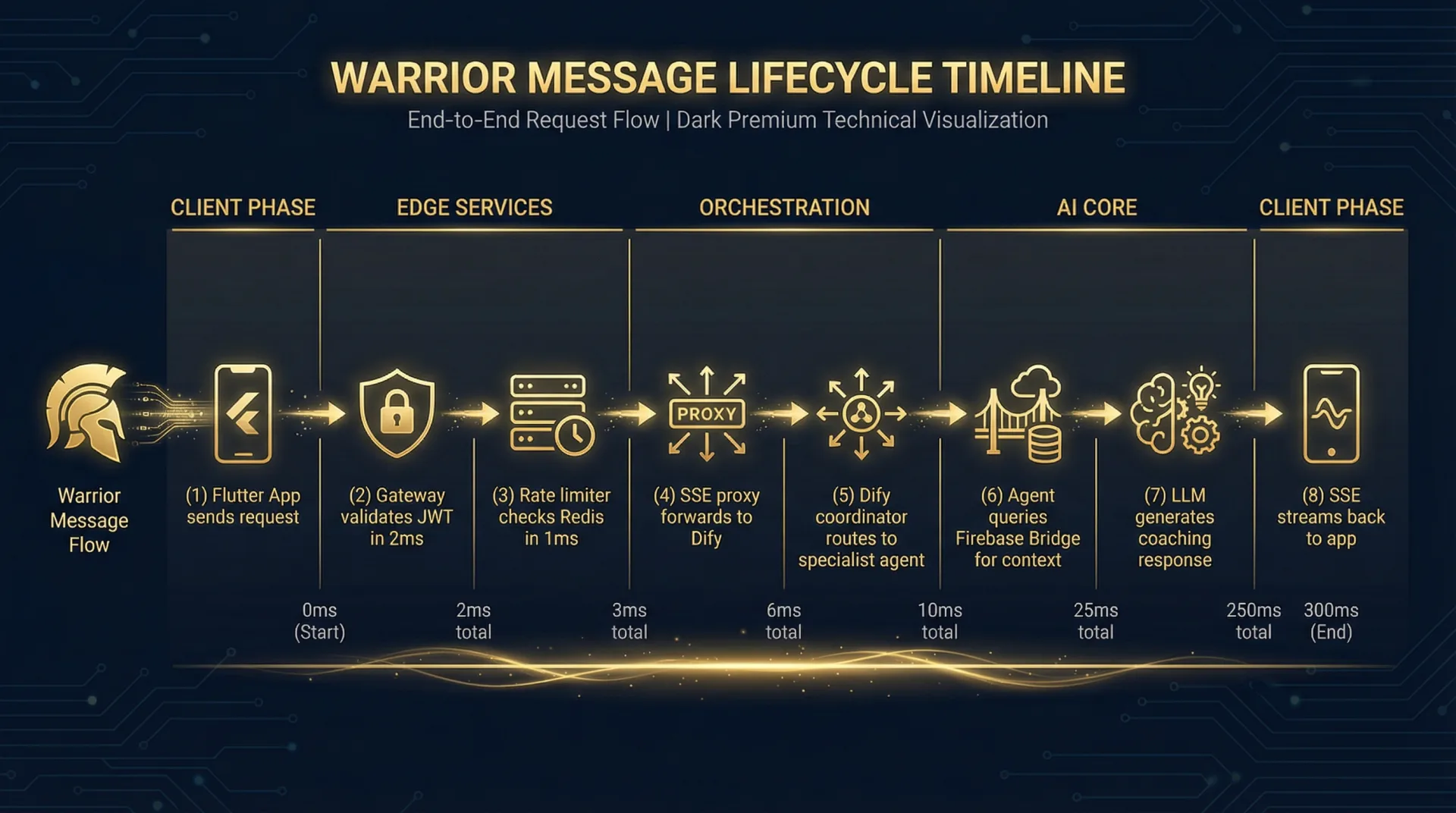

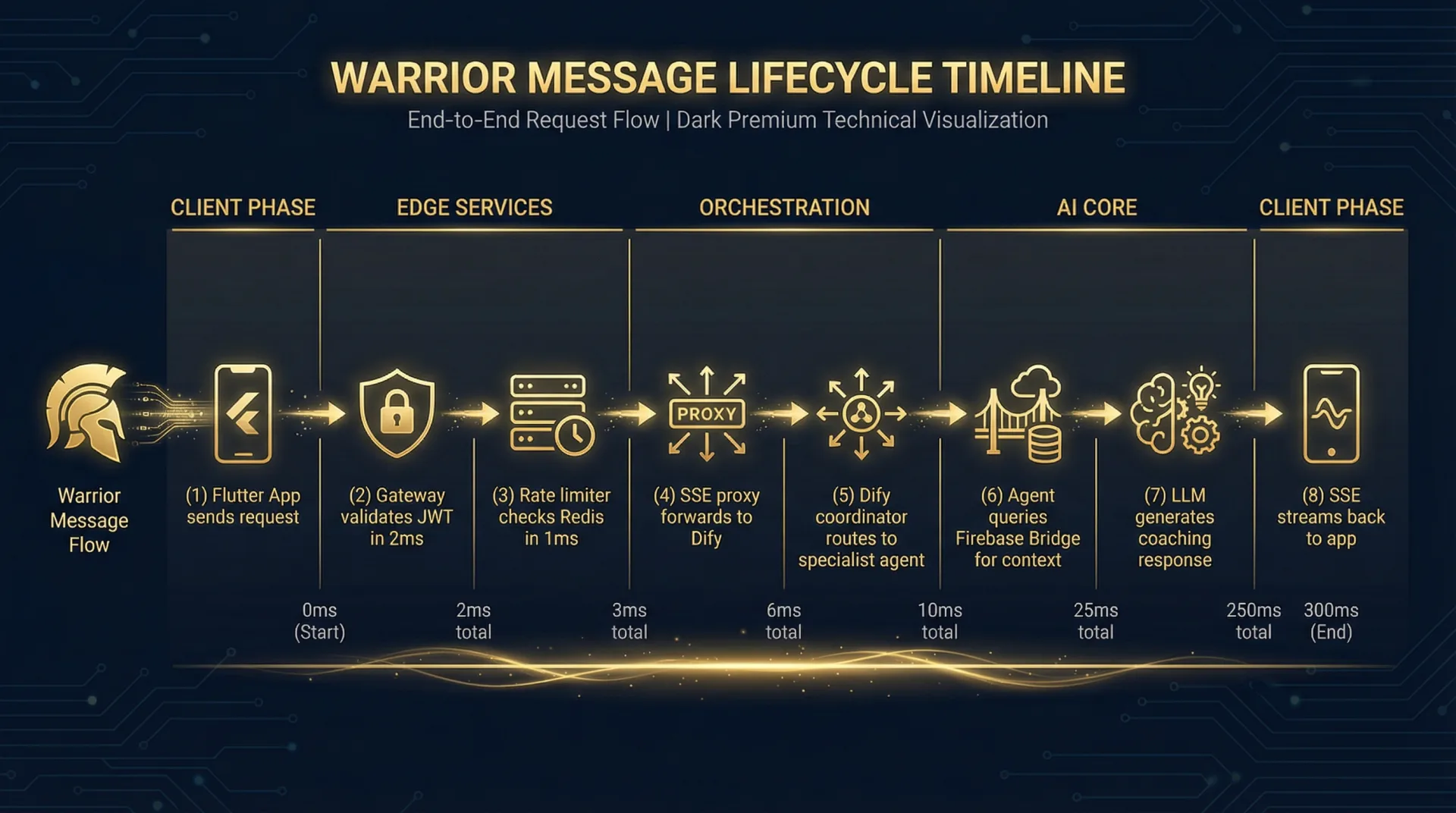

Full Request Flow

One coaching session, end to end. Total request lifetime: 3–17 seconds.

| Step | What happens | Latency |
|---|---|---|
| Flutter App | Warrior sends message. Stateless. Scales infinitely. | n/a |
| Firebase Auth | RSA-signed JWT verified. uid extracted. Token discarded. | 50ms |
| Hono Gateway | Bun runtime. CORS enforced. Rate limit checked (20 req/min/user). Dify handoff. | 50ms |
| Dify Context Loader | Calls Firebase Bridge for Warrior history. Redis cache: 5ms hit / 200ms miss. | 5–200ms |
| Dify Coordinator Agent | Routes to one of 7 specialist agents based on message intent. 80%+ routing accuracy. | 500ms |
| Specialist Agent + LLM | Qdrant RAG context fetched. LLM generates streaming coaching response. | 2,000–15,000ms ⚠️ bottleneck |
| SSE Stream → Flutter | Tokens streamed back through Gateway to the Warrior's app in real time. | ongoing |
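The per-step latencies in the flow above can be added up as a quick sanity check. The sketch below is illustrative only: the `Stage` type and function name are assumptions, and the figures are copied from the flow. The itemized steps sum to roughly 2.6–15.8 seconds; the stated 3–17 second lifetime presumably also covers network transit and streaming overhead, which the flow does not itemize.

```typescript
// Per-stage latency bounds in milliseconds, copied from the request flow.
interface Stage {
  name: string;
  minMs: number;
  maxMs: number;
}

const stages: Stage[] = [
  { name: "Firebase Auth", minMs: 50, maxMs: 50 },
  { name: "Hono Gateway", minMs: 50, maxMs: 50 },
  { name: "Dify Context Loader", minMs: 5, maxMs: 200 }, // cache hit vs. miss
  { name: "Dify Coordinator Agent", minMs: 500, maxMs: 500 },
  { name: "Specialist Agent + LLM", minMs: 2000, maxMs: 15000 },
];

// Sum best-case and worst-case latencies, and report how much of the
// worst case is taken by the single slowest stage.
function latencyBudget(list: Stage[]): {
  minMs: number;
  maxMs: number;
  slowestShareOfMax: number;
} {
  const minMs = list.reduce((sum, s) => sum + s.minMs, 0);
  const maxMs = list.reduce((sum, s) => sum + s.maxMs, 0);
  const slowest = Math.max(...list.map((s) => s.maxMs));
  return { minMs, maxMs, slowestShareOfMax: slowest / maxMs };
}

const budget = latencyBudget(stages);
console.log(budget);
```

In the worst case the LLM step accounts for well over 90% of the itemized time, which is consistent with it being flagged as the bottleneck.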

5-Layer Architecture

Layer 1: Flutter Mobile App

Client layer. Warrior-facing interface. Firebase Auth SDK handles token lifecycle. Sends authenticated HTTP requests with Bearer token. Receives SSE stream.

Flutter · Firebase Auth SDK · SSE Client

✓ Scales infinitely

Layer 2: Hono Gateway

Authentication and routing layer. Validates the Firebase JWT, enforces CORS (WARAI-71), applies per-user rate limiting (WARAI-72), and forwards to Dify. The Bun runtime handles 10,000+ concurrent HTTP connections natively, so this layer is not a bottleneck.

Hono · Bun · firebase-admin · TypeScript

✓ Not a bottleneck
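A minimal sketch of the per-user rate limiting described for the Gateway (20 req/min, with a `Retry-After` value on rejection). The source does not say which algorithm or store the Gateway uses, so the fixed-window approach, the `FixedWindowLimiter` class, the in-memory `Map`, and the injectable clock are all illustrative assumptions.

```typescript
interface RateDecision {
  allowed: boolean;
  retryAfterSec: number; // value for the Retry-After header when allowed is false
}

// Fixed-window counter keyed by Firebase uid (hypothetical helper).
class FixedWindowLimiter {
  private windows = new Map<string, { start: number; count: number }>();
  private limit: number;
  private windowMs: number;

  constructor(limit: number = 20, windowMs: number = 60000) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  check(uid: string, nowMs: number = Date.now()): RateDecision {
    const w = this.windows.get(uid);
    // No window yet, or the previous window has fully elapsed: start a new one.
    if (!w || nowMs - w.start >= this.windowMs) {
      this.windows.set(uid, { start: nowMs, count: 1 });
      return { allowed: true, retryAfterSec: 0 };
    }
    if (w.count < this.limit) {
      w.count += 1;
      return { allowed: true, retryAfterSec: 0 };
    }
    // Over the limit: tell the client how long until the window resets.
    const retryAfterSec = Math.ceil((w.start + this.windowMs - nowMs) / 1000);
    return { allowed: false, retryAfterSec };
  }
}
```

A handler would call `check(uid)` after token verification and, when `allowed` is false, respond with status 429 and `Retry-After: retryAfterSec`. A multi-instance deployment would need a shared store rather than process memory.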

Layer 3: Dify CE (AI Coaching Engine)

Core AI layer. 11 containers, including the API server, Celery workers, nginx, PostgreSQL, Redis, and Qdrant. Routes messages through the Coordinator to the 7 Specialist Agents. Celery workers are the primary scaling bottleneck: each LLM call occupies one worker for 2–15 seconds.

Dify CE 1.13.0 · Celery · PostgreSQL · Redis · Qdrant

⚠ Primary bottleneck

Layer 4: Firebase Bridge

Firestore access layer. Exposes REST endpoints for reading and writing Warrior data. Context snapshots are cached in Redis (6 parallel reads, 80%+ target hit rate, 5ms cached / 200ms cold). Zod schema validation on all writes. Not a bottleneck.

Hono · Bun · firebase-admin · Redis · Zod

✓ Not a bottleneck
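The cached context snapshot follows what is commonly called a cache-aside pattern: serve from cache when fresh, otherwise load from the source and store the result. The sketch below is an assumption-laden illustration, not the Bridge's code: an in-memory `Map` stands in for Redis, the loader is synchronous for brevity (a real Firestore read would be async), and `TtlCache`, the key format, and the TTL value are hypothetical.

```typescript
// In-memory stand-in for a Redis-backed snapshot cache (hypothetical).
class TtlCache<T> {
  private entries = new Map<string, { value: T; expiresAt: number }>();
  private ttlMs: number;
  hits = 0;
  misses = 0;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Cache-aside read: return a fresh entry, or call load() and store its result.
  getOrLoad(key: string, load: () => T, nowMs: number = Date.now()): T {
    const entry = this.entries.get(key);
    if (entry && entry.expiresAt > nowMs) {
      this.hits += 1; // fast path (the ~5ms case in the text)
      return entry.value;
    }
    this.misses += 1; // slow path (the ~200ms cold read in the text)
    const value = load();
    this.entries.set(key, { value, expiresAt: nowMs + this.ttlMs });
    return value;
  }

  // Drop a snapshot after a write so the next read reloads it.
  invalidate(key: string): void {
    this.entries.delete(key);
  }

  hitRate(): number {
    const total = this.hits + this.misses;
    return total === 0 ? 0 : this.hits / total;
  }
}
```

Tracking `hitRate()` gives a direct way to compare observed behaviour against the 80%+ cache target.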

Layer 5: Firebase Firestore

Persistent data layer. Stores Power Stacks, Door Cards, Bible Stacks, Core4 scores, and Warrior context. Google-managed infrastructure, auto-scales. At 10,000 users: ~$220/mo read cost. Not a bottleneck.

Google Firestore · Firebase · GDPR Article 9 data

✓ Auto-scales

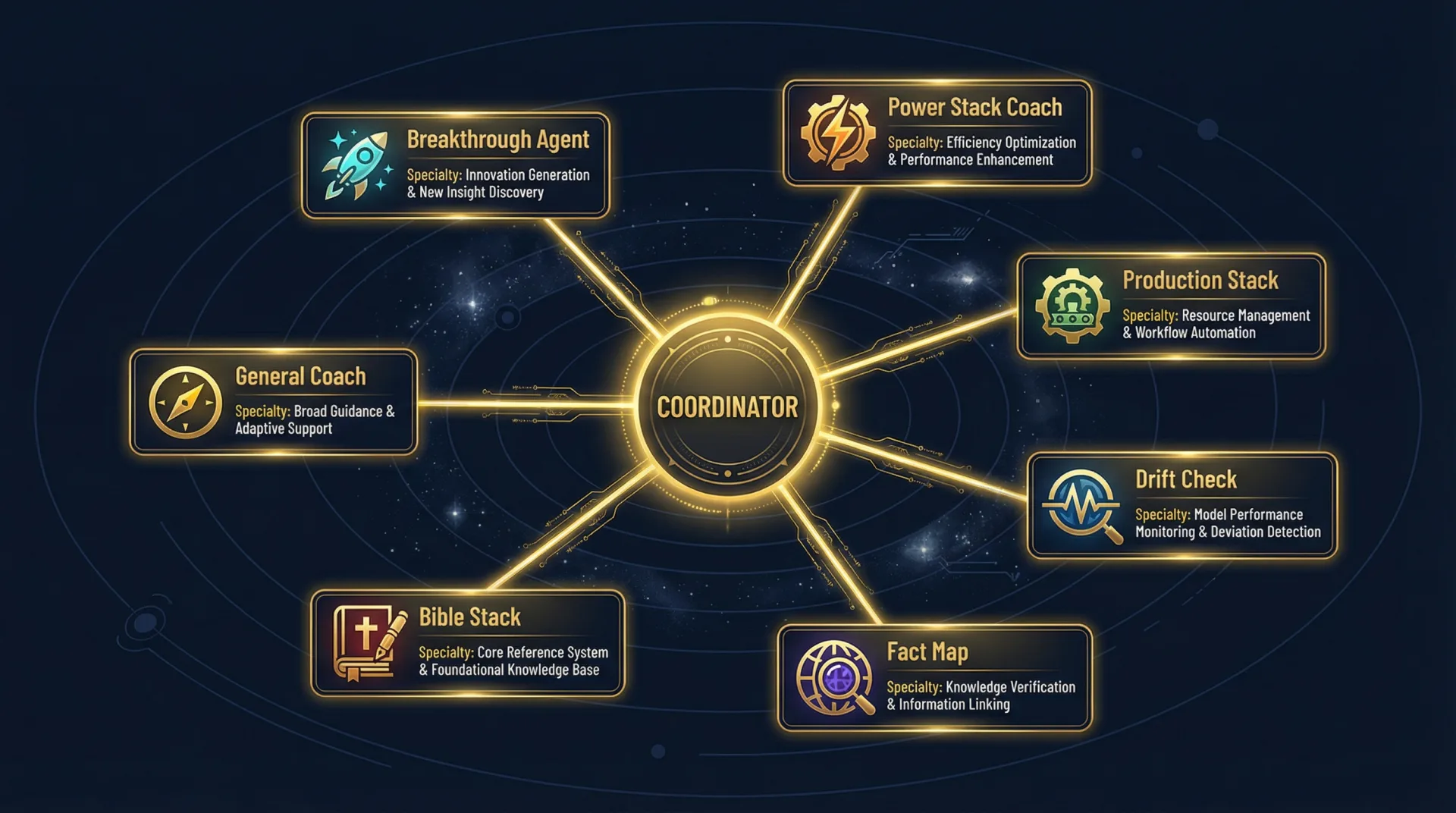

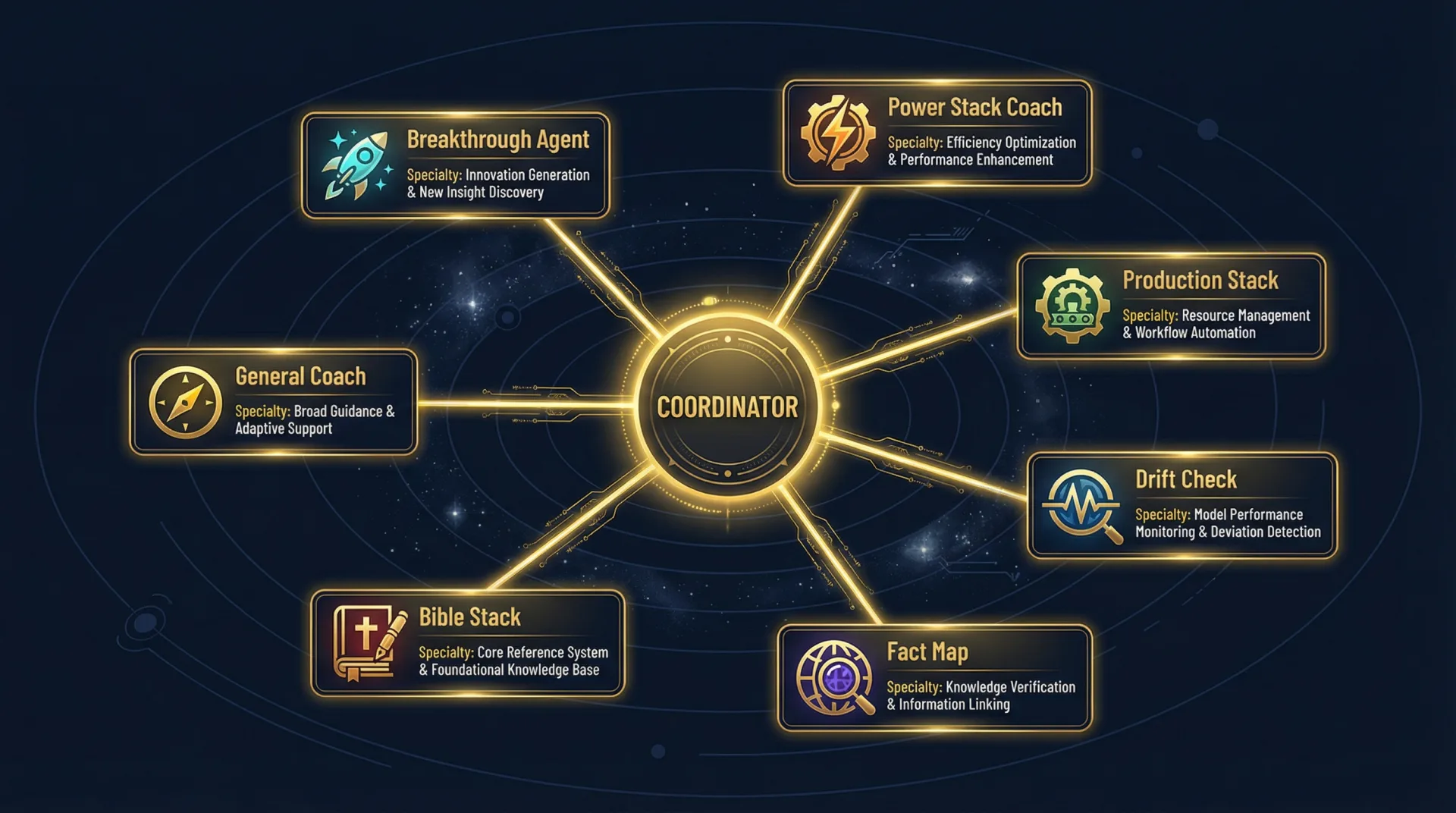

7 Specialist Agents

The Coordinator routes each Warrior message to the right specialist. The routing accuracy target is 80% (at least 8 of every 10 messages routed correctly). LLM calls are spread across several providers via OpenRouter to manage rate limits.
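A minimal sketch of the dispatch step and the accuracy check, assuming the Coordinator's LLM has already produced an intent label. The agent identifiers (`power_stack`, `fact_map`, and so on) are hypothetical names invented for illustration; the model pairings are the ones listed for each agent in this section.

```typescript
// Specialist agents and their models as listed in this section.
// The snake_case ids are illustrative assumptions.
const SPECIALIST_MODELS = {
  power_stack: "DeepSeek Chat",
  fact_map: "Claude 3.5 Sonnet",
  bible_stack: "Claude 3.5 Sonnet",
  door_card: "DeepSeek Chat",
  breakthrough: "Gemini 2.0 Flash",
  drift: "DeepSeek Chat",
  general: "Claude 3.5 Sonnet",
} as const;

type AgentId = keyof typeof SPECIALIST_MODELS;

function isAgentId(label: string): label is AgentId {
  return Object.prototype.hasOwnProperty.call(SPECIALIST_MODELS, label);
}

// Unknown or missing labels fall back to the General Agent.
function routeIntent(label: string | null): AgentId {
  if (label !== null && isAgentId(label)) return label;
  return "general";
}

// Fraction of messages routed to the expected agent.
function routingAccuracy(predicted: AgentId[], expected: AgentId[]): number {
  if (predicted.length !== expected.length || expected.length === 0) {
    throw new Error("predicted and expected must be non-empty and equal length");
  }
  const correct = predicted.filter((p, i) => p === expected[i]).length;
  return correct / expected.length;
}

const ROUTING_TARGET = 0.8; // at least 8 of 10 messages
```

Running `routingAccuracy` over a labelled sample of messages and comparing the result with `ROUTING_TARGET` is one way the 80% target could be checked.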

| Agent | Role | Model |
|---|---|---|
| Coordinator | Routes messages to specialist agents. Orchestrator, not user-facing. | Gemini 2.0 Flash |
| Power Stack Agent | Body domain. Core4 performance, physical training, energy management. | DeepSeek Chat |
| Fact Map Agent | Being domain. Mental models, clarity, identity mapping. | Claude 3.5 Sonnet |
| Bible Stack Agent | Being domain. Faith integration, spiritual clarity, scriptural application. | Claude 3.5 Sonnet |
| Door Card Agent | Balance domain. Relationship frameworks, partnership, family leadership. | DeepSeek Chat |
| Breakthrough Agent | Business domain. Decision-making, business vision, production breakthroughs. | Gemini 2.0 Flash |
| Drift Agent | Recognizes drift patterns. Redirects Warriors back to core commitments. | DeepSeek Chat |
| General Agent | Fallback for unclassified messages. Maintains conversation continuity. | Claude 3.5 Sonnet |

Infrastructure & Security Perimeter

🖥️ VPS — Vultr

- Ubuntu 24.04 LTS

- 4 vCPU / 12GB RAM / $72/mo (V0)

- Docker Compose stack

- All ports closed except via Tunnel

☁️ Cloudflare Tunnel

- Sole ingress path for user-facing traffic

- Cloudflare WAF → Tunnel → nginx → Gateway

- TLS terminated at Cloudflare edge

- DDoS protection at global scale

🔐 Twingate Zero-Trust

- Developer + admin access layer

- No VPN passwords — identity-based

- Staging VPS, Dify admin, SSH access

- Not for user-facing traffic (see WARAI-69)

🐳 Docker Network Isolation

- Bridge: gateway-network (Gateway + Bridge only)

- Bridge: dify-internal (Dify + Bridge only)

- Isolated: sandbox-isolated (dify-sandbox)

- Isolated: plugin-isolated (plugin daemon)

Security Hardening Status

| Item | Status | Notes |
|---|---|---|
| Firebase JWT validation | ✓ Live | firebase-auth.ts: token verified, uid extracted, token discarded |
| CORS origin restriction | ✓ Fixed (WARAI-71) | ALLOWED_ORIGIN env var; must be set in staging/production |
| Per-user rate limiting (Gateway) | ✓ Fixed (WARAI-72) | 20 req/min, configurable, Retry-After header on 429 |
| Bridge localhost-only binding | ✓ By design | Port 4000 bound to 127.0.0.1; unreachable from internet |
| Zod validation on Bridge writes | ✓ Live | Field types, 5,000 char max, allowed enums |
| AI audit trail | ✓ Live | digital_trainer_stack: true on every AI write |
| nginx reverse proxy (WARAI-69) | 📋 Pending | Gateway must bind 127.0.0.1 + nginx in front |
| Bridge write rate limit (ADR-W021) | 📋 Pending | Redis INCR pattern, to implement before beta |
| HMAC-signed user_id (ADR-W026) | 📋 Proposed | Restores cryptographic trust through Gateway→Dify→Bridge chain |
| Docker network segmentation (ADR-W027) | 📋 Proposed | 5 named trust tiers; replaces flat network |
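The write-validation and audit-trail rows in the table can be illustrated together. This is a sketch under stated assumptions, not the Bridge's schema: hand-rolled checks stand in for Zod to keep the example dependency-free, the `domain` and `text` field names are hypothetical, and the enum values are borrowed from the four domains named for the agents. Only the 5,000 character limit and the `digital_trainer_stack: true` stamp come from the source.

```typescript
// Hypothetical enum: the four coaching domains named in this document.
const ALLOWED_DOMAINS = ["body", "being", "balance", "business"] as const;
type Domain = (typeof ALLOWED_DOMAINS)[number];

const MAX_CHARS = 5000; // character limit stated in the hardening table

interface ValidWrite {
  domain: Domain;
  text: string;
  digital_trainer_stack: true; // audit marker stamped on every AI write
}

type ValidationResult =
  | { ok: true; value: ValidWrite }
  | { ok: false; errors: string[] };

function validateWrite(input: unknown): ValidationResult {
  if (typeof input !== "object" || input === null) {
    return { ok: false, errors: ["payload must be an object"] };
  }
  const record = input as Record<string, unknown>;
  const domain = record["domain"];
  const text = record["text"];
  const errors: string[] = [];

  // Field type and allowed-enum check.
  if (
    typeof domain !== "string" ||
    (ALLOWED_DOMAINS as readonly string[]).indexOf(domain) === -1
  ) {
    errors.push("domain must be one of: " + ALLOWED_DOMAINS.join(", "));
  }
  // Field type and length check.
  if (typeof text !== "string") {
    errors.push("text must be a string");
  } else if (text.length > MAX_CHARS) {
    errors.push("text exceeds " + MAX_CHARS + " characters");
  }

  if (errors.length > 0) return { ok: false, errors };
  return {
    ok: true,
    value: {
      domain: domain as Domain,
      text: text as string,
      digital_trainer_stack: true,
    },
  };
}
```

Stamping the audit flag inside the validator, rather than trusting the caller to send it, means a write cannot be persisted without it.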

Scaling Roadmap: V0 → 10,000 Warriors

The primary bottleneck is Dify's Celery worker pool — each LLM call occupies a worker for 2–15 seconds. Every scaling stage targets this bottleneck.

Stage 0: Current (50 concurrent Warriors)

VPS: 4 vCPU / 12GB / $72/mo

Celery: Default config (1–2 workers)

Parallel sessions: ~10–20

Action: None — suitable for demo and first 100 users

Stage 1: Celery Optimisation (150 concurrent Warriors)

Cost delta: $0 (config change only)

Time: 2–4 hours

Change: 2 workers × 20 gevent concurrency = 40 parallel LLM sessions

Trigger: p95 > 5s under normal load

Stage 2: VPS Vertical Scale (400 concurrent Warriors)

VPS: 8 vCPU / 32GB / $180/mo (+$108/mo)

Time: 1 day (Vultr resize, ~10 min downtime)

Change: 4 workers × 20 gevent = 80 parallel sessions

Stage 3: Service Separation (1,000 concurrent Warriors)

Infrastructure: 3 servers / ~$450/mo total

Time: 1–2 weeks

Servers: Gateway ($72) · Dify AI Engine ($180) · Dedicated Redis ($48)

Change: 6 workers × 20 gevent = 120 parallel sessions

Stage 4: Horizontal Dify Scale (5,000–10,000 Warriors)

Infrastructure: Load-balanced Dify cluster / ~$1,500/mo

Time: 4–6 weeks

Change: 3 Dify instances × 120 sessions = 360 parallel LLM sessions

Key: Shared Redis for session affinity across instances
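The parallel-session figures in the stages above all come from the same multiplication: instances × workers × gevent concurrency. The sketch below reproduces them and adds a rough throughput estimate using Little's law (throughput ≈ concurrency / request duration) with the 2–15 second LLM call time. The type and function names are illustrative, and the 6 workers per instance in Stage 4 is an assumption inferred from the "120 sessions" per instance figure.

```typescript
interface StagePlan {
  stage: string;
  instances: number;
  workersPerInstance: number;
  geventPerWorker: number;
}

// Maximum LLM calls that can be in flight at once.
function parallelSessions(p: StagePlan): number {
  return p.instances * p.workersPerInstance * p.geventPerWorker;
}

// Little's law estimate: sustainable requests per second when each LLM call
// holds a slot for between fastSec and slowSec seconds.
function throughputRange(
  sessions: number,
  fastSec: number = 2,
  slowSec: number = 15
): { minPerSec: number; maxPerSec: number } {
  return { minPerSec: sessions / slowSec, maxPerSec: sessions / fastSec };
}

const plans: StagePlan[] = [
  { stage: "Stage 1", instances: 1, workersPerInstance: 2, geventPerWorker: 20 },
  { stage: "Stage 2", instances: 1, workersPerInstance: 4, geventPerWorker: 20 },
  { stage: "Stage 3", instances: 1, workersPerInstance: 6, geventPerWorker: 20 },
  // Assumed: each Stage 4 instance keeps the Stage 3 worker layout.
  { stage: "Stage 4", instances: 3, workersPerInstance: 6, geventPerWorker: 20 },
];

for (const plan of plans) {
  const sessions = parallelSessions(plan);
  console.log(plan.stage, sessions, throughputRange(sessions));
}
```

The throughput range is a back-of-envelope bound only; real capacity also depends on provider rate limits and how often Warriors actually send messages.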

Full Production Cost at 10,000 Users

| Component | Monthly Cost |
|---|---|
| Dify cluster (5 × $180) | $900 |
| Gateway / Bridge servers (2 × $72) | $144 |
| Redis cluster | $150 |
| PostgreSQL (managed) | $200 |
| LLM providers (OpenRouter) | ~$1,380 |
| Firebase Firestore reads | ~$220 |
| Deepgram STT | ~$400 |
| Load balancer / CDN | $50 |
| Total | ~$3,444/mo |
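As a quick check of the table, the line items can be summed and broken down per user. The sketch below uses the figures from the table as given; the variable and function names are illustrative, and the per-user figure simply divides the total by the 10,000-user target.

```typescript
interface CostLine {
  component: string;
  usdPerMonth: number;
}

// Monthly line items copied from the cost table.
const costLines: CostLine[] = [
  { component: "Dify cluster", usdPerMonth: 900 },
  { component: "Gateway / Bridge servers", usdPerMonth: 144 },
  { component: "Redis cluster", usdPerMonth: 150 },
  { component: "PostgreSQL (managed)", usdPerMonth: 200 },
  { component: "LLM providers (OpenRouter)", usdPerMonth: 1380 },
  { component: "Firebase Firestore reads", usdPerMonth: 220 },
  { component: "Deepgram STT", usdPerMonth: 400 },
  { component: "Load balancer / CDN", usdPerMonth: 50 },
];

function totalUsd(lines: CostLine[]): number {
  return lines.reduce((sum, line) => sum + line.usdPerMonth, 0);
}

// Share of the total taken by one named component (0 if it is not listed).
function shareOf(lines: CostLine[], component: string): number {
  const matching = lines.filter((line) => line.component === component);
  return totalUsd(matching) / totalUsd(lines);
}

const monthlyTotal = totalUsd(costLines);
const perUser = monthlyTotal / 10000;
const llmShare = shareOf(costLines, "LLM providers (OpenRouter)");

console.log({ monthlyTotal, perUser, llmShare });
```

The items sum to $3,444, or about $0.34 per user per month at 10,000 users, with LLM usage making up roughly 40% of the bill.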